Microsoft DP-600 Implementing Analytics Solutions Using Microsoft Fabric Exam Practice Test

Implementing Analytics Solutions Using Microsoft Fabric Questions and Answers

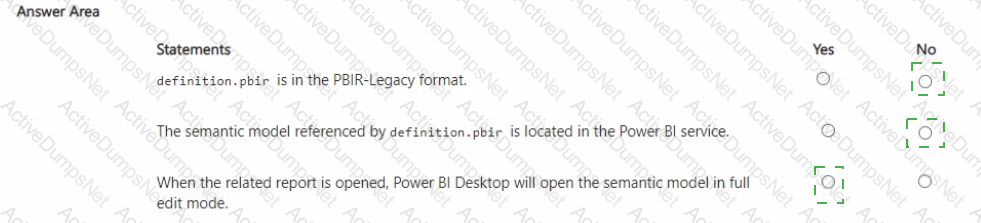

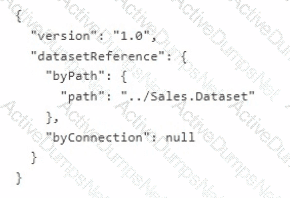

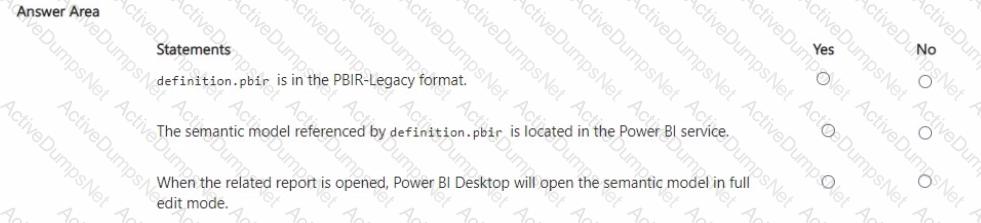

You have a Microsoft Power BI project that contains a file named definition.pbir. definition.pbir contains the following JSON.

For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point.

You have a Fabric tenant that contains two workspaces named Workspace1 and Workspace2. Workspace1 contains a lakehouse named Lakehouse1. Workspace2 contains a lakehouse named Lakehouse2. Lakehouse1 contains a table named dbo.Sales. Lakehouse2 contains a table named dbo.Customers.

You need to ensure that you can write queries that reference both dbo.Sales and dbo.Customers in the same SQL query without making additional copies of the tables.

What should you use?

Note: This section contains one or more sets of questions with the same scenario and problem. Each question presents a unique solution to the problem. You must determine whether the solution meets the stated goals. More than one solution in the set might solve the problem. It is also possible that none of the solutions in the set solve the problem.

After you answer a question in this section, you will NOT be able to return. As a result, these questions do not appear on the Review Screen.

Your network contains an on-premises Active Directory Domain Services (AD DS) domain named contoso.com that syncs with a Microsoft Entra tenant by using Microsoft Entra Connect.

You have a Fabric tenant that contains a semantic model.

You enable dynamic row-level security (RLS) for the model and deploy the model to the Fabric service.

You query a measure that includes the USERNAME() function, and the query returns a blank result.

You need to ensure that the measure returns the user principal name (UPN) of a user.

Solution: You add user objects to the list of synced objects in Microsoft Entra Connect.

Does this meet the goal?

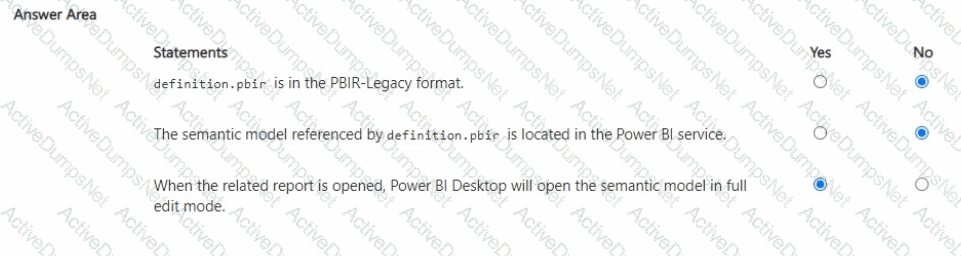

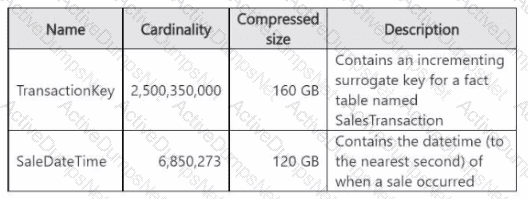

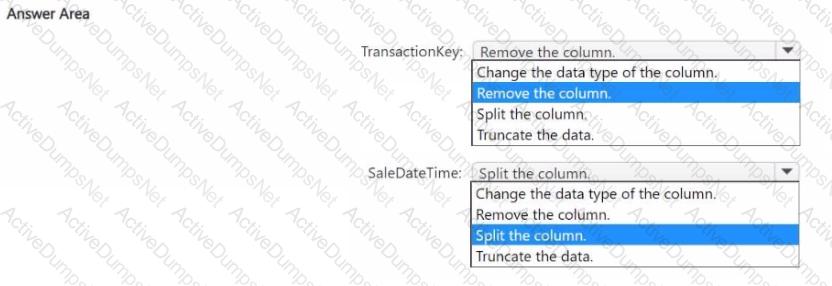

You have a Fabric tenant that contains a semantic model named model1. The two largest columns in model1 are shown in the following table.

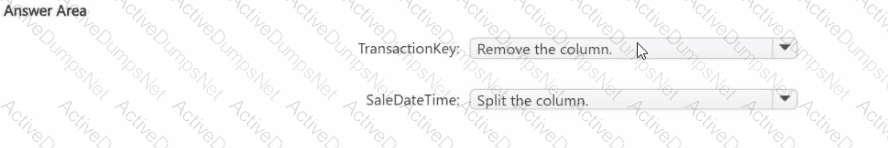

You need to optimize model1. The solution must meet the following requirements:

• Reduce the model size.

• Increase refresh performance when using Import mode.

• Ensure that the datetime value for each sales transaction is available in the model.

What should you do on each column? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.
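As background for the size and refresh requirements, a column that stores a full datetime usually has far more distinct values than separate date and time columns holding the same information, and columnar Import-mode storage generally compresses low-cardinality columns better. The sketch below only illustrates that cardinality effect. The sample timestamps and the `split_cardinality` helper are hypothetical and are not taken from the exhibit.

```python
from datetime import datetime, timedelta


def split_cardinality(timestamps):
    """Return distinct counts for (combined datetime, date part, time part)."""
    combined = len(set(timestamps))
    dates = len({ts.date() for ts in timestamps})
    times = len({ts.time() for ts in timestamps})
    return combined, dates, times


# Hypothetical sample: one transaction per hour over three days
start = datetime(2024, 1, 1)
sample = [start + timedelta(seconds=s) for s in range(0, 3 * 86400, 3600)]

combined, dates, times = split_cardinality(sample)
print(f"datetime: {combined} distinct, date: {dates} distinct, time: {times} distinct")
```

Both parts together still carry the full datetime of each transaction, so nothing is lost by storing them separately.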

You have a Fabric tenant that contains a workspace named Workspace1. Workspace1 is assigned to a Fabric capacity.

You need to recommend a solution to provide users with the ability to create and publish custom Direct Lake semantic models by using external tools. The solution must follow the principle of least privilege.

Which three actions in the Fabric Admin portal should you include in the recommendation? Each correct answer presents part of the solution.

NOTE: Each correct answer is worth one point.

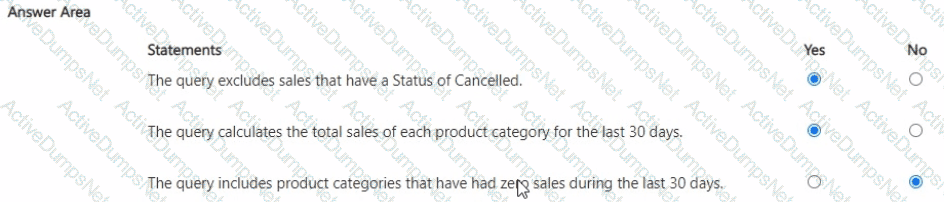

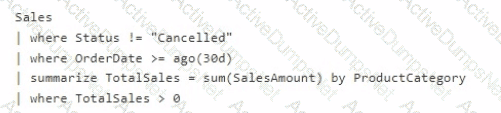

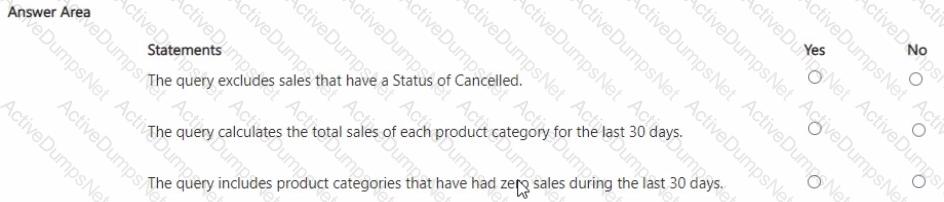

You have the following KQL query.

For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point.

You have a Fabric tenant that contains a workspace named Workspace1. Workspace1 contains a single semantic model that has two Microsoft Power BI reports.

You have a Microsoft 365 subscription that contains a data loss prevention (DLP) policy named DLP1.

You need to apply DLP1 to the items in Workspace1.

What should you do?

You have a Fabric workspace named Workspace1.

You need to create a semantic model named Model1 and publish Model1 to Workspace1. The solution must meet the following requirements:

• Can revert to previous versions of Model1 as required.

• Identifies differences between saved versions of Model1.

• Uses Microsoft Power BI Desktop to publish to Workspace1.

• Can edit item definition files by using Microsoft Visual Studio Code.

Which two actions should you perform? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

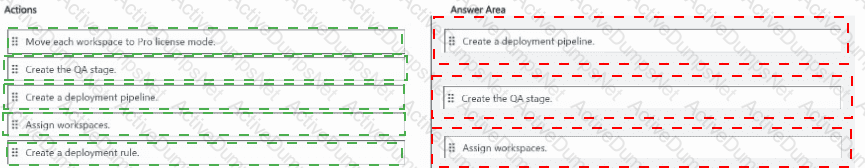

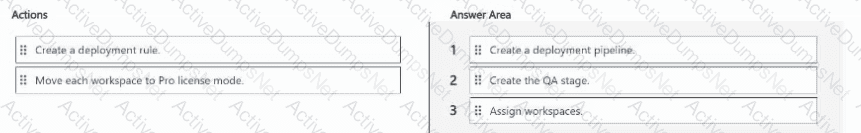

You have a Fabric tenant that contains four workspaces named Development, Test, QA, and Production. All the workspaces are in Premium Per User (PPU) license mode.

You plan to use a release pipeline to support the development lifecycle from Development to Production.

Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

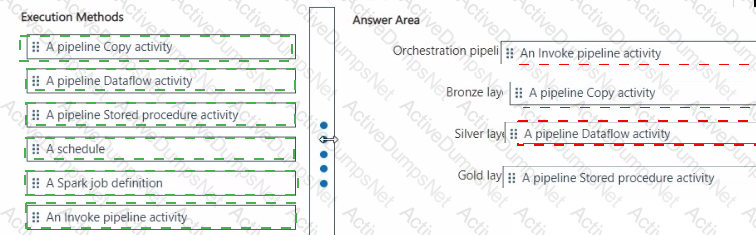

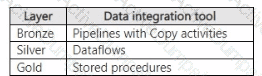

You are implementing a medallion architecture in a single Fabric workspace.

You have a lakehouse that contains the Bronze and Silver layers and a warehouse that contains the Gold layer.

You create the items required to populate the layers as shown in the following table.

You need to ensure that the layers are populated daily in sequential order such that Silver is populated only after Bronze is complete, and Gold is populated only after Silver is complete. The solution must minimize development effort and complexity.

What should you use to execute each set of items? To answer, drag the appropriate options to the correct items. Each option may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.
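The ordering requirement in this scenario (Silver only after Bronze completes, Gold only after Silver completes) amounts to a sequential chain with on-success dependencies. The sketch below shows that control flow in plain Python, as an illustration of the dependency logic rather than of any Fabric feature. The `run_layers_in_order` helper and the placeholder items are hypothetical stand-ins for the items in the table.

```python
def run_layers_in_order(layers):
    """Run each layer's items, starting a layer only after the previous one finished.

    `layers` is a list of (layer_name, [callable, ...]) pairs in run order.
    Returns the names of the layers that completed.
    """
    completed = []
    for layer_name, items in layers:
        try:
            for item in items:
                item()
        except Exception:
            # A failed item stops the chain, so later layers never run
            break
        completed.append(layer_name)
    return completed


# Hypothetical stand-ins for the real items that populate each layer
run_log = []


def failing_item():
    raise RuntimeError("Silver load failed")


completed_layers = run_layers_in_order([
    ("Bronze", [lambda: run_log.append("bronze")]),
    ("Silver", [lambda: run_log.append("silver")]),
    ("Gold", [lambda: run_log.append("gold")]),
])
print(completed_layers)
```

If an item in one layer raises an error, the layers after it are skipped, which is the behavior the requirement describes.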


Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You have a Fabric tenant that contains a lakehouse named Lakehouse1. Lakehouse1 contains a Delta table named Customer.

When you query Customer, you discover that the query is slow to execute. You suspect that maintenance was NOT performed on the table.

You need to identify whether maintenance tasks were performed on Customer.

Solution: You run the following Spark SQL statement:

DESCRIBE DETAIL customer

Does this meet the goal?

You have a Fabric tenant that contains a Microsoft Power BI report.

You are exploring a new semantic model.

You need to display the following column statistics:

• Count

• Average

• Null count

• Distinct count

• Standard deviation

Which Power Query function should you run?
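For reference, the five statistics listed above can be computed for a single column as in the following minimal Python sketch. It only illustrates what each statistic means and is not Power Query code. The `profile_column` helper is hypothetical, and its conventions (nulls are included in the count, excluded from the distinct count, and the sample standard deviation is used) are assumptions.

```python
import statistics


def profile_column(values):
    """Compute basic profile statistics for one column; None represents null."""
    non_null = [v for v in values if v is not None]
    return {
        "count": len(values),
        "null_count": len(values) - len(non_null),
        "distinct_count": len(set(non_null)),
        "average": statistics.fmean(non_null) if non_null else None,
        # Sample standard deviation needs at least two values
        "standard_deviation": statistics.stdev(non_null) if len(non_null) > 1 else None,
    }


# Hypothetical column values
profile = profile_column([2, 4, 4, None, 6])
print(profile)
```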

You have a Fabric workspace named Workspace1 that contains an eventstream named Eventstream1.

Eventstream1 reads data from an Azure event hub named Eventhub1.

Eventhub1 contains the following columns.

| Name | Data type |
| --- | --- |
| MachineId | Int |
| Payload | Dynamic |
| Datetime | Datetime |
| Location | String |

You need to add a continuous percentile calculation to the Payload column. The solution must minimize development effort.

What should you do?

You need to provide Power BI developers with access to the pipeline. The solution must meet the following requirements:

• Ensure that the developers can deploy items to the workspaces for Development and Test.

• Prevent the developers from deploying items to the workspace for Production.

• Follow the principle of least privilege.

Which three levels of access should you assign to the developers? Each correct answer presents part of the solution. NOTE: Each correct answer is worth one point.

You have a Fabric tenant that contains a warehouse.

You use a dataflow to load a new dataset from OneLake to the warehouse.

You need to add a Power Query step to identify the maximum values for the numeric columns.

Which function should you include in the step?

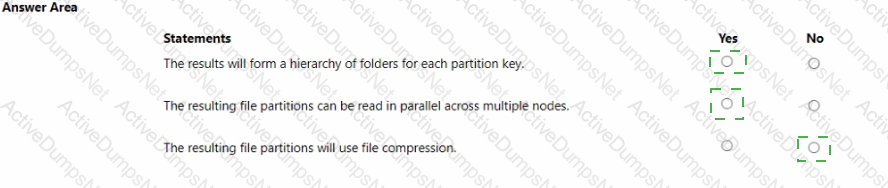

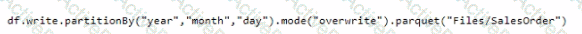

You have a Fabric tenant that contains a lakehouse.

You are using a Fabric notebook to save a large DataFrame by using the following code.

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

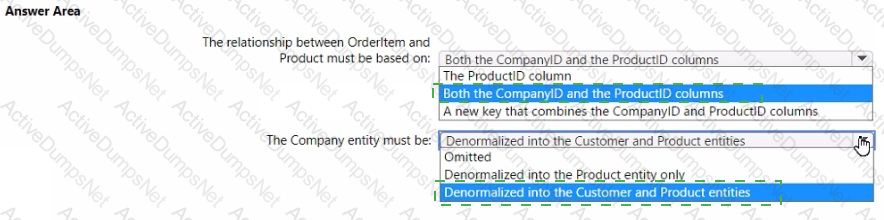

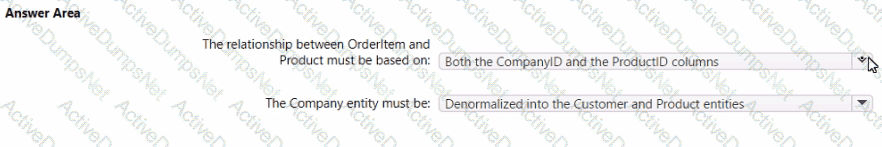

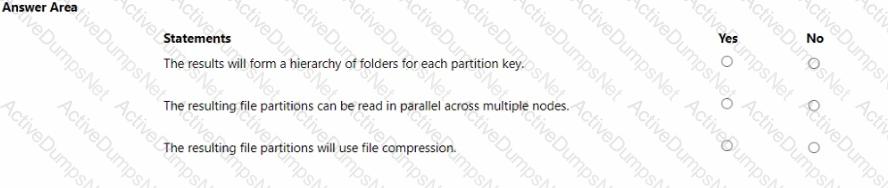

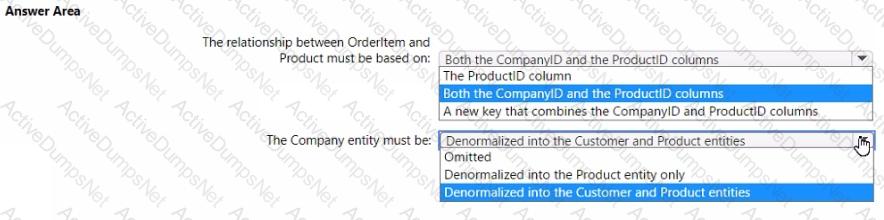

You have the source data model shown in the following exhibit.

The primary keys of the tables are indicated by a key symbol beside the columns involved in each key.

You need to create a dimensional data model that will enable the analysis of order items by date, product, and customer.

What should you include in the solution? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

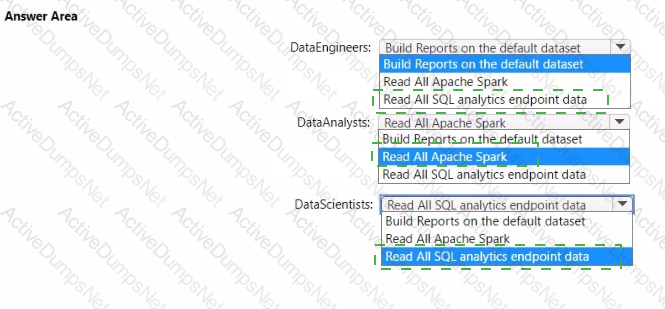

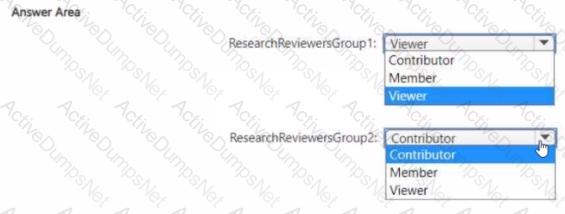

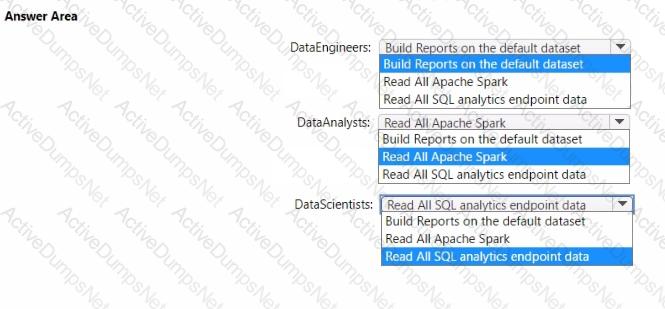

Which workspace role assignments should you recommend for ResearchReviewersGroup1 and ResearchReviewersGroup2? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You need to refresh the Orders table of the Online Sales department. The solution must meet the semantic model requirements. What should you include in the solution?

What should you use to implement calculation groups for the Research division semantic models?

You need to ensure that Contoso can use version control to meet the data analytics requirements and the general requirements. What should you do?

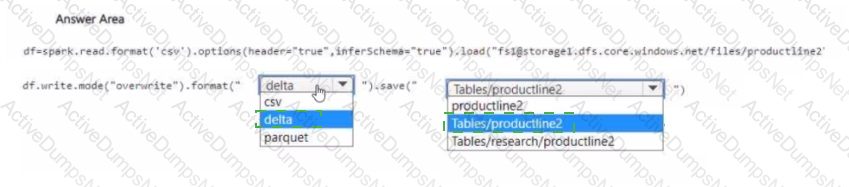

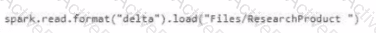

You need to migrate the Research division data for Productline2. The solution must meet the data preparation requirements. How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

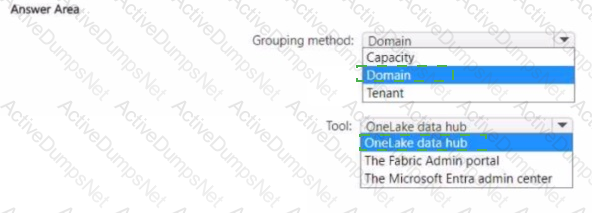

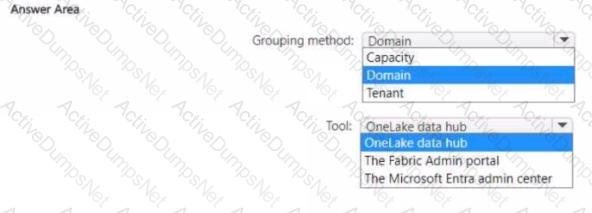

You need to recommend a solution to group the Research division workspaces.

What should you include in the recommendation? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Which syntax should you use in a notebook to access the Research division data for Productline1?

A)

B)

C)

D)

You need to recommend which type of Fabric capacity SKU meets the data analytics requirements for the Research division. What should you recommend?

What should you recommend using to ingest the customer data into the data store in the AnalyticsPOC workspace?

You need to assign permissions for the data store in the AnalyticsPOC workspace. The solution must meet the security requirements.

Which additional permissions should you assign when you share the data store? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You need to ensure the data loading activities in the AnalyticsPOC workspace are executed in the appropriate sequence. The solution must meet the technical requirements.

What should you do?

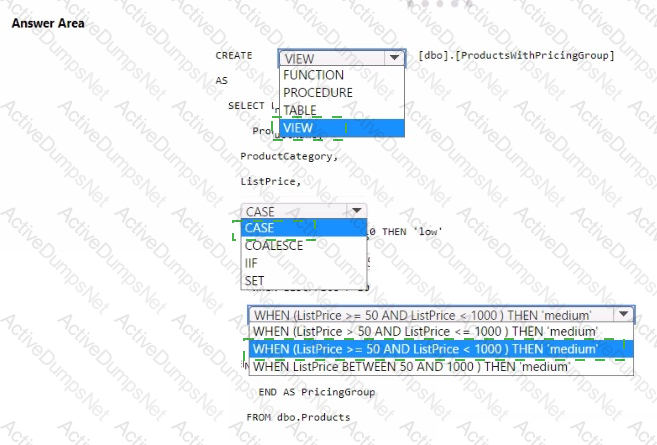

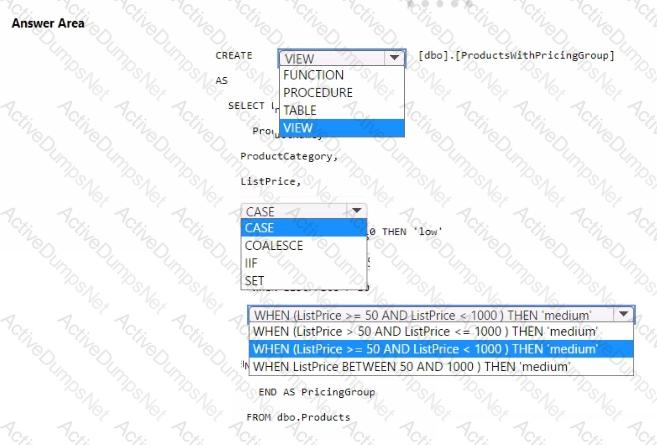

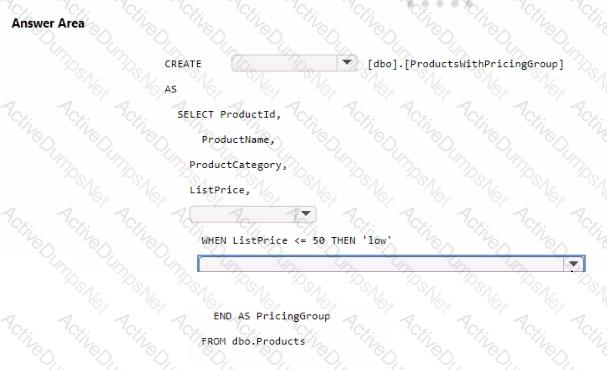

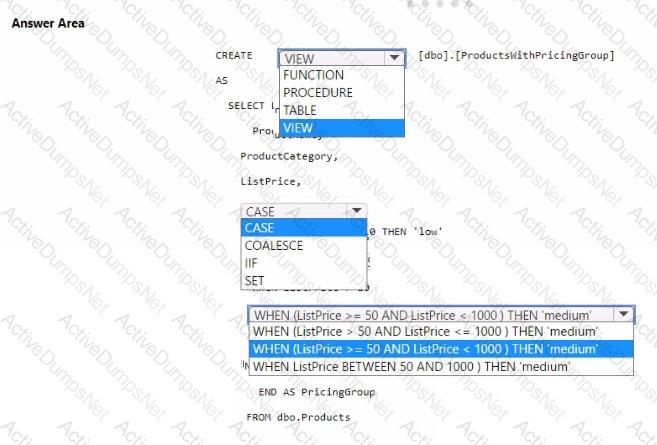

You need to resolve the issue with the pricing group classification.

How should you complete the T-SQL statement? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

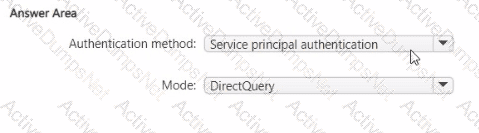

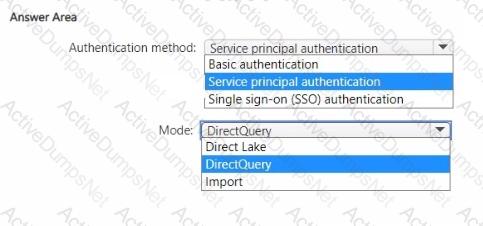

You need to design a semantic model for the customer satisfaction report.

Which data source authentication method and mode should you use? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You need to implement the date dimension in the data store. The solution must meet the technical requirements.

What are two ways to achieve the goal? Each correct answer presents a complete solution.

NOTE: Each correct selection is worth one point.

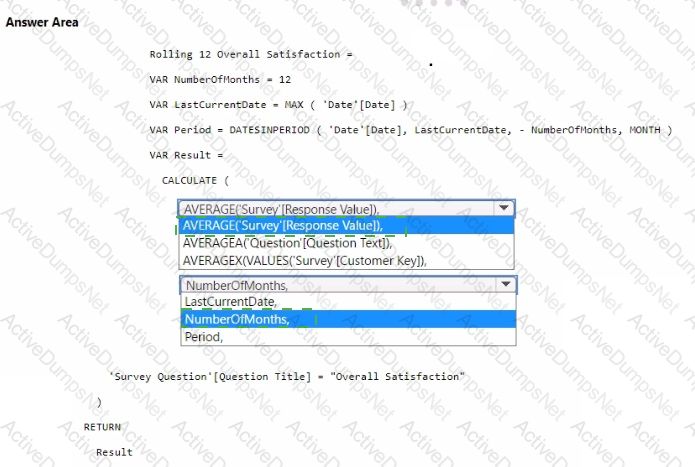

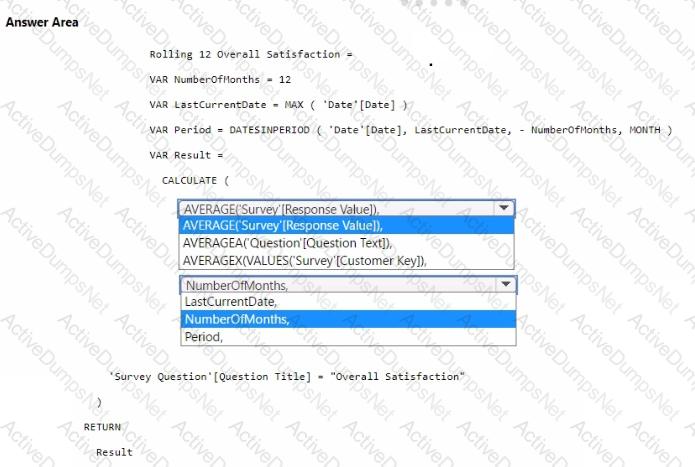

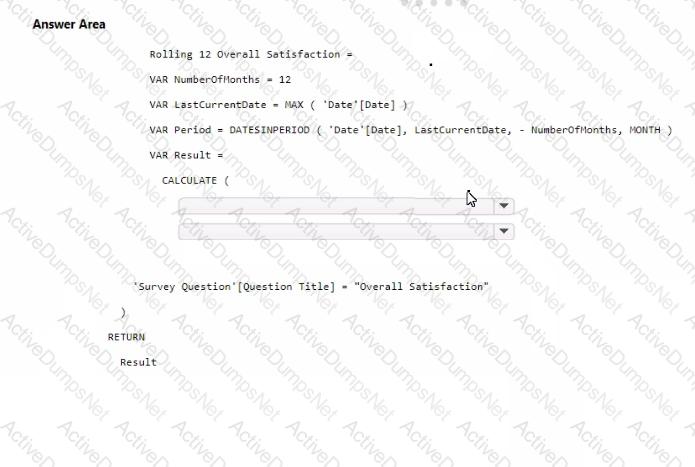

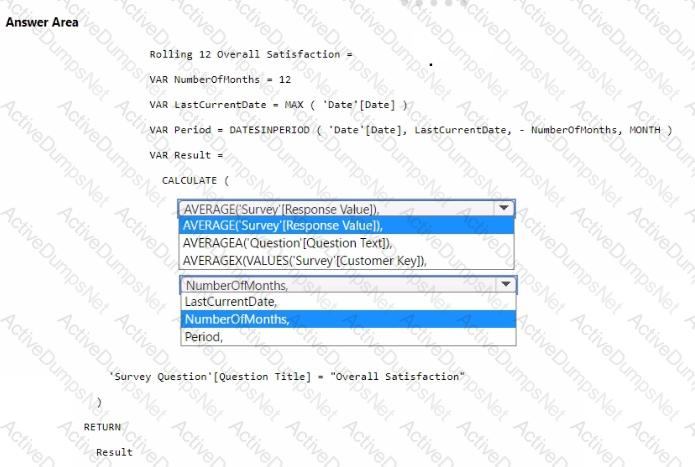

You need to create a DAX measure to calculate the average overall satisfaction score.

How should you complete the DAX code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You need to recommend a solution to prepare the tenant for the PoC.

Which two actions should you recommend performing from the Fabric Admin portal? Each correct answer presents part of the solution.

NOTE: Each correct answer is worth one point.

Which type of data store should you recommend in the AnalyticsPOC workspace?